Download the repository and navigate to the project directory:

git clone https://github.com/iMED-Lab/Ultra.git

cd Ultra

It is recommended to create and activate a dedicated Conda environment for the framework:

conda create -n ultra python=3.11 -y

conda activate ultra

Install the required dependencies and the framework itself:

pip install -e .

Note: Configure your nnU-Net paths as described in the official nnU-Net instructions before executing these commands.
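As a quick sanity check before training, the path configuration can be verified from Python. The sketch below assumes the three environment variables that nnU-Net v2 reads (`nnUNet_raw`, `nnUNet_preprocessed`, `nnUNet_results`); the helper name `missing_nnunet_paths` is hypothetical and not part of Ultra or nnU-Net.

```python
import os

# Environment variables that nnU-Net v2 reads to locate its data folders.
NNUNET_PATH_VARS = ("nnUNet_raw", "nnUNet_preprocessed", "nnUNet_results")


def missing_nnunet_paths(env=None):
    """Return the nnU-Net path variables that are unset or empty.

    Hypothetical helper for illustration; `env` defaults to os.environ.
    """
    env = os.environ if env is None else env
    return [name for name in NNUNET_PATH_VARS if not env.get(name)]


if __name__ == "__main__":
    missing = missing_nnunet_paths()
    if missing:
        print("Unset nnU-Net path variables:", ", ".join(missing))
    else:
        print("All nnU-Net path variables are set.")
```

If any variable is reported as unset, follow the nnU-Net path setup instructions before continuing.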

To train the model, specify the dataset ID, configuration, and fold:

ultra-train <dataset_id> <configuration> <fold>

Example: ultra-train 888 2d 0

To generate Artery/Vein (A/V) predictions using your trained models, use the following command:

ultra-predict -i <input_folder> -o <output_folder> -d <dataset_id> -c <configuration|default=2d> -f <fold>

Note: By default, prediction uses the checkpoint that achieved the best performance during training.
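When predictions are launched from a script, it can help to assemble the command programmatically. The following is a minimal sketch that uses only the flags shown above; the function name `build_predict_command` and the folder names `images` and `predictions` are hypothetical placeholders.

```python
import shlex


def build_predict_command(input_folder, output_folder, dataset_id, fold,
                          configuration="2d"):
    """Build the ultra-predict argument list from the documented flags.

    Hypothetical helper: it only assembles the command and does not run it.
    """
    return [
        "ultra-predict",
        "-i", str(input_folder),
        "-o", str(output_folder),
        "-d", str(dataset_id),
        "-c", configuration,
        "-f", str(fold),
    ]


# Placeholder folders; dataset 888 and fold 0 mirror the training example.
cmd = build_predict_command("images", "predictions", 888, 0)
print(shlex.join(cmd))
# prints: ultra-predict -i images -o predictions -d 888 -c 2d -f 0
```

The resulting list can be passed to `subprocess.run(cmd, check=True)` once the environment is activated and the nnU-Net paths are configured.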

The core implementation for our neighborhood connectivity encoding is located in ultra/utilities/to_neighbor_connectivity.py. This module captures multi-scale spatial relationships to preserve structural continuity.

Key Components:

- to_nk_maps (entry point): Aggregates neighborhood connectivity maps across multiple receptive fields (e.g., kernel sizes of 3, 5, and 7).

- nk_encode: Computes the connectivity encoding for a specific kernel size $k$. It evaluates the structural continuity between a center pixel and its surrounding neighborhood.

- bresenham_line: An underlying utility that implements Bresenham's line algorithm to accurately determine the discrete pixel path between two points during the connectivity check.
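To make the idea concrete, here is a simplified, pure-Python sketch of a line-based connectivity check. It is not the code in ultra/utilities/to_neighbor_connectivity.py: the names `line_pixels` and `neighbor_connectivity` are hypothetical, and the rule used here (a border pixel of the $k \times k$ window counts as connected to the center only if every pixel on the Bresenham path between them is foreground) is an assumption made for illustration.

```python
def line_pixels(r0, c0, r1, c1):
    """Bresenham's line algorithm: pixels from (r0, c0) to (r1, c1), inclusive."""
    pixels = []
    dr = abs(r1 - r0)
    dc = -abs(c1 - c0)
    step_r = 1 if r0 < r1 else -1
    step_c = 1 if c0 < c1 else -1
    err = dr + dc
    while True:
        pixels.append((r0, c0))
        if r0 == r1 and c0 == c1:
            break
        e2 = 2 * err
        if e2 >= dc:  # step along the row axis
            err += dc
            r0 += step_r
        if e2 <= dr:  # step along the column axis
            err += dr
            c0 += step_c
    return pixels


def neighbor_connectivity(mask, row, col, k):
    """Map each border offset of the k x k window to a connectivity flag.

    Assumed rule (illustrative only): the border pixel is connected to the
    center if all pixels on the discrete line between them are foreground.
    """
    half = k // 2
    height, width = len(mask), len(mask[0])
    result = {}
    for d_row in range(-half, half + 1):
        for d_col in range(-half, half + 1):
            if max(abs(d_row), abs(d_col)) != half:
                continue  # keep only pixels on the window border
            r, c = row + d_row, col + d_col
            inside = 0 <= r < height and 0 <= c < width
            result[(d_row, d_col)] = inside and all(
                mask[pr][pc] for pr, pc in line_pixels(row, col, r, c)
            )
    return result


# Toy mask: a single horizontal vessel along row 2.
mask = [[0] * 5, [0] * 5, [1] * 5, [0] * 5, [0] * 5]
for k in (3, 5):
    conn = neighbor_connectivity(mask, 2, 2, k)
    print(k, sorted(offset for offset, ok in conn.items() if ok))
# prints: 3 [(0, -1), (0, 1)]
# prints: 5 [(0, -2), (0, 2)]
```

On this toy mask, only the left and right border pixels are flagged as connected at each kernel size, which matches the intuition that the encoding follows the vessel direction. Repeating the check for several kernel sizes mirrors the multi-scale aggregation described for to_nk_maps.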

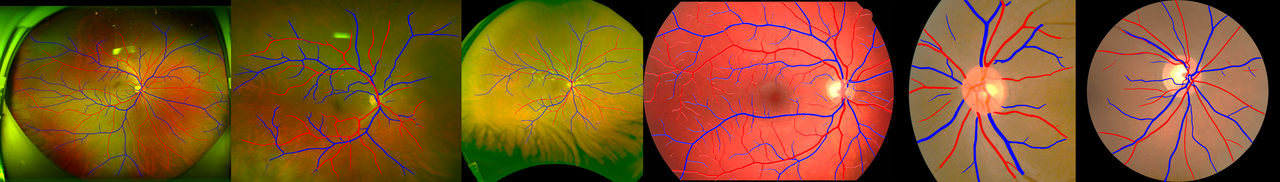

Pre-trained model weights are available for download for CFP-AV, UWF-AV, and CFP+UWF-AV. To run inference, download the model folders and place them in the directory specified by your nnUNet_results environment variable (see the nnU-Net instructions on setting up paths for details).

Our paper is currently under review. Citation details are coming soon!

We would like to extend our deepest gratitude to the following contributors and organizations whose support has been instrumental in the development of the Ultra framework:

- The brilliant developers and maintainers of nnU-Net v2, whose robust segmentation framework heavily inspired this project.

- The broader research community in retinal imaging and medical image analysis for continuously providing valuable insights and high-quality benchmark datasets.